Chapter 8

Tree Testing: Your Guide to Improve Navigation and UX

In the previous chapter, we talked about how you can conduct a card sorting session to solve issues related to your information architecture (IA). Card sorting is typically complemented by tree testing to validate the identified categories. In this chapter, we delve into this research method to learn how it can help you create a better user experience.

What is tree testing?

Tree testing is a UX research method used to evaluate the navigability of your site or app and the findability of topics on it. It presents your site architecture as plain-text category labels organized hierarchically. Put simply, users are asked to find something on your site, such as a certain feature or product, using a stripped-down outline of your site or app. It’s an important step in understanding whether users can find what they need in your product.

A tree testing example from Maze

Sometimes tree testing is also described as ‘reverse card sorting’ because, while tree testing is used to assess the findability of items in a given structure, card sorting is used to understand how users would naturally categorize information. Both methods give you actionable insights to improve the UX or assess the navigation of your product.

Tree testing is a highly valuable exercise to get a clear view of what real users expect as topics in the navigation of a website and how these topics are clustered from primary to secondary. Tree testing should be the starting point for designing better digital applications.

Mario Tedde, Senior UX Researcher at FedEx Express

What is tree testing used for?

From redesigns to site migrations, tree testing can be used to challenge your assumptions before developing a product or making any significant changes.

Some of the questions tree testing can answer include:

Common use cases for tree testing

Whether you're launching a new product or managing a content-heavy platform, tree testing can help inform design decisions. Some key moments to use tree testing are:

- New product development: Whether you're developing a new website, mobile app, software suite, or digital platform, your product's information architecture is a critical element. Tree testing allows you to evaluate and validate your proposed IA's usability before investing time and resources into development.

- Website redesigns or site migrations: If it’s time to upgrade, move, or rebrand your site, you need to ensure that the new or restructured site remains intuitive and that important functionality doesn't get lost in the process. Tree testing will help keep your users in mind and ensure the changes made improve navigation and findability.

- A/B testing different IAs: Let’s say you’ve got a software suite but, as new features are added, users complain they can’t find what they’re looking for. Your team shares different proposals for how to reorganize the features, and with tree testing, you can evaluate each proposed IA without having to fully implement it first.

Tree testing examples

Before we get into how to conduct your own tree test, let’s take a look at some examples of how you can use tree testing:

- A TV streaming service may task participants with locating where they can upgrade their account plan to add more devices.

- An online grocery store could ask users to find how to change their delivery time slot or add a new payment method.

- A bus service app might set participants the goal of finding where they can see the next bus stopping at their local station.

- A tech company may request users navigate to the settings page of their laptop and find where to change their screensaver.

What are the benefits of tree testing in UX research?

Tree testing is an excellent method to evaluate the effectiveness of your website or app’s navigation hierarchy early on in the design process. The data collected from a tree test helps you understand how users navigate your site so you can better organize your content. Let’s break down all the benefits in detail:

1. Test the usability and effectiveness of your navigation

As the examples above show, you can use tree testing to identify navigation problems such as confusing labels, redundant pages, or dead-end paths that prevent users from finding what they need, signing up, or activating. This involves assessing whether your navigation supports the overall goals of your website or product. If users take a long time to locate key items, or fail to locate them at all, your navigation may not be supporting those goals.

2. Assess the labeling and language used in your website or app

The labels you use for your categories, menus, and other navigation elements help users traverse your site or app. Tree testing allows you to test your labels in a stripped-down context, free of the visual design, actual content (like the text on a page or items in a list), and interactive elements (like buttons or links) that might otherwise influence users' interpretations. It’s an honest and bare-bones assessment of whether your labeling works.

3. Gather insight into user mental models

A mental model refers to a user's internal understanding and expectation of how something works based on their past experiences and assumptions. These heavily influence how users approach and interact with a product—what they expect to find, where they expect to find it, and how they expect it to behave. So, the more you understand users’ mental models, the more intuitive and user-friendly you can make your product.

Tree testing helps you gather insight into people’s mental models of a product and how they would naturally think about exploring it.

Melanie Buset, Senior UX Researcher at Shopify

4. Prioritize issues for iteration

By analyzing how users interact with your 'tree', you can identify specific areas of your structure that are causing confusion or difficulty—categories that users frequently misunderstand, labels that are unclear, or items that are hard to locate.

But not all issues are equally impactful. While some issues cause significant difficulty for many users, others might be minor nuisances that only affect a handful of people. Tree testing measures the severity of different issues through usability metrics like task completion rates, time taken to complete tasks, or users' subjective feedback.

I think many designers underestimate the complexity of navigation design. Even if you already have a standard pattern that you’re leveraging, the information design can make or break your navigation.

Sinead Davis Cochrane, UX Manager at Workday

How to conduct a tree test

Step 1: Create a user research plan and prepare your tree testing questions

As with any UX research method, the first step to running a tree test is to create a research plan and align with stakeholders on the objectives of the research. Defining the research questions and communicating the timeline to the team are also key.

Make sure that everyone is on board and understands what the test implies. For example, if the results come back and show that the current IA is not working, you should be able to allot enough time to make the appropriate changes and maybe even test again.

Melanie Buset, Senior UX Researcher at Shopify

To conduct a tree test, start by getting your site structure ready, create tasks for your participants, and define the key metrics you’ll record to analyze the data gathered.

Keep in mind that during the tree testing session, only the text version of the site is given to your participants, who are asked to complete tasks to locate particular items on the site. It’s recommended that you keep these sessions short, around 15 to 20 minutes, and ask participants to complete no more than 10 tasks.

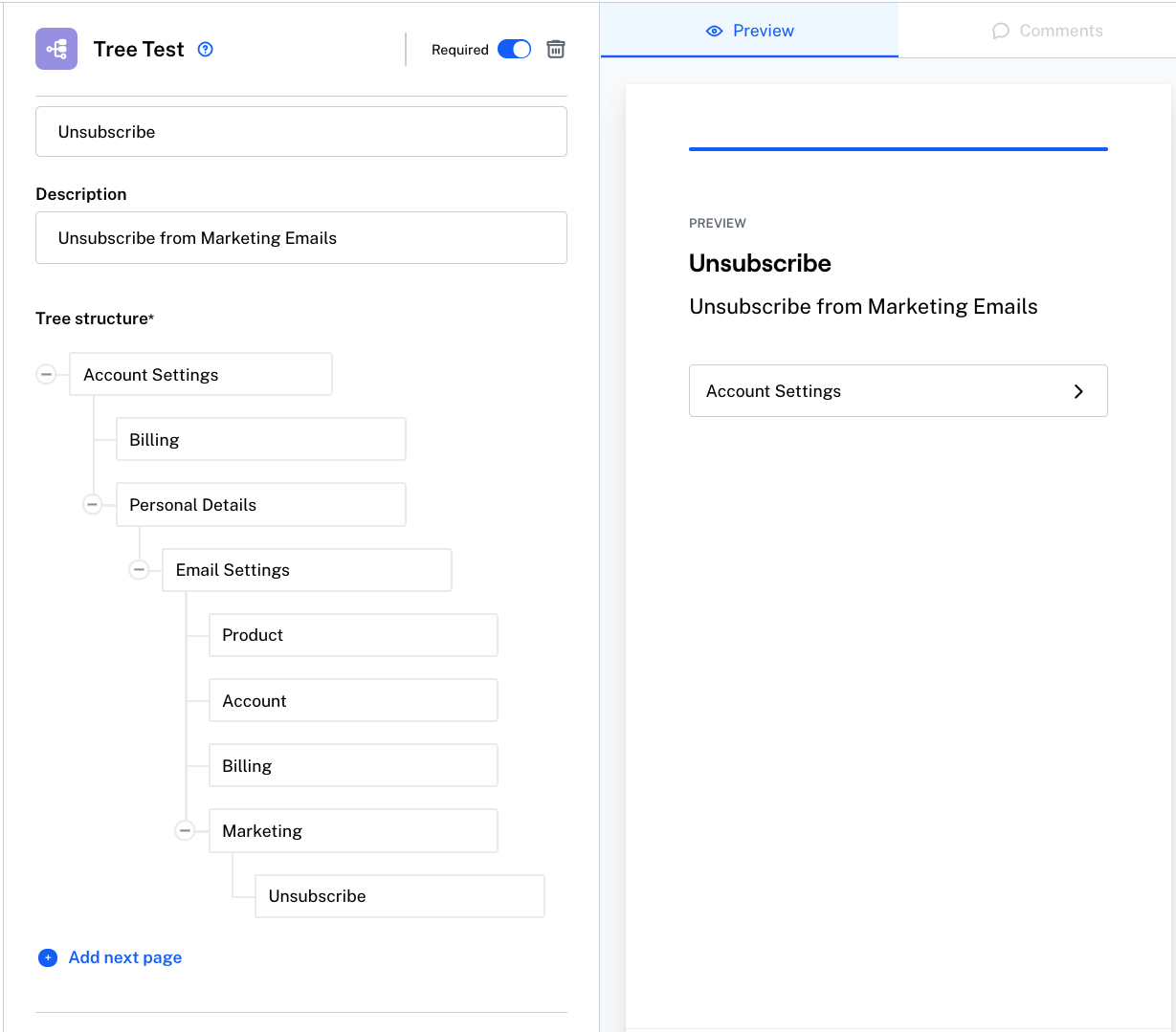

Step 2: Define the tree structure

Begin by outlining the tree structure with the categories, subcategories, and pages in your site or app. Being specific about your subcategories is important because it will prompt realistic user behavior. For instance, a category in the navigation could be called Resources, with Blog, Help Center, and Guides as its subcategories.

Even if you want to test a particular area in your product, make sure your target audience understands how that area relates to the product as a whole. This will give you findings you can act on when reviewing your results.
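
One way to draft such a structure before loading it into a testing tool is as nested data. The sketch below is an illustration under stated assumptions, not a format any particular tool requires: only Resources, Blog, Help Center, and Guides come from the example above, while the other branches and the `leaf_paths` helper are hypothetical.

```python
# A navigation tree as nested dictionaries: each key is a label and each
# value holds that label's children (an empty dict marks a leaf page).
# Only "Resources" and its three children come from the example in the text;
# "Product", "Features", "Integrations", and "Pricing" are hypothetical.
TREE = {
    "Product": {"Features": {}, "Integrations": {}},
    "Resources": {"Blog": {}, "Help Center": {}, "Guides": {}},
    "Pricing": {},
}


def leaf_paths(tree, prefix=()):
    """Return every path from the top level down to a leaf, as a tuple of labels."""
    paths = []
    for label, children in tree.items():
        path = prefix + (label,)
        if children:
            paths.extend(leaf_paths(children, path))
        else:
            paths.append(path)
    return paths


# Print the outline participants would navigate, one destination per line.
for path in leaf_paths(TREE):
    print(" > ".join(path))
```

Listing every leaf path like this is a quick way to check that each subcategory sits where you intended before participants see the tree.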

Step 3: Come up with a set of goal-based tasks

Next, create tasks for participants to find a page or location in a tree using a top-down approach. Just like in usability testing, writing good tasks is key when doing a tree test study.

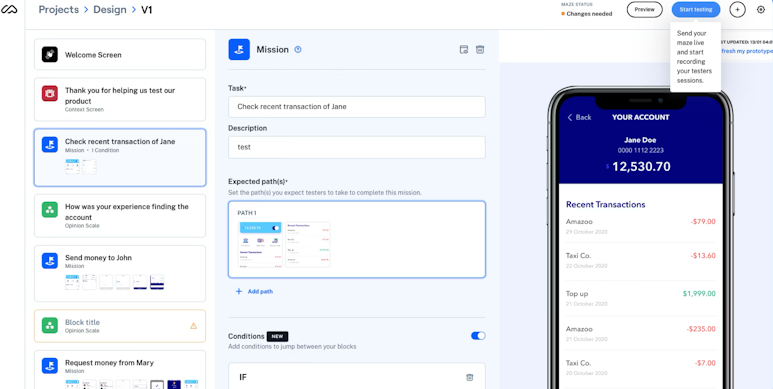

For example, if you want to test the discoverability of the upgrade page in your product, you can create a task that asks participants to find the best way to upgrade the product. Here’s an example of a tree test task:

“You’ve signed up to a 7-day free trial for a budgeting app. You’ve enjoyed using the app and want to upgrade your account. Find how to do that.”

NNgroup recommends that for each task you write, you should also define the right answers that correspond to where the information is located within the tree, so you can automatically calculate success rates for each task.
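
As a rough sketch of that idea, a task can be stored alongside its correct destinations so that success is scored automatically. The task wording comes from the example above; the `Task` class, the tree labels, and the participant answers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    prompt: str
    # Every destination in the tree that counts as a right answer.
    correct_answers: frozenset


upgrade_task = Task(
    prompt=(
        "You've signed up to a 7-day free trial for a budgeting app. "
        "You've enjoyed using the app and want to upgrade your account. "
        "Find how to do that."
    ),
    # Hypothetical labels: two places in the tree lead to the upgrade page.
    correct_answers=frozenset({
        ("Account", "Plan & Billing"),
        ("Settings", "Subscription"),
    }),
)


def success_rate(task, selected_answers):
    """Share of participants whose final selection is a correct answer."""
    if not selected_answers:
        return 0.0
    hits = sum(1 for answer in selected_answers if answer in task.correct_answers)
    return hits / len(selected_answers)


# Hypothetical final selections from four participants.
answers = [
    ("Account", "Plan & Billing"),
    ("Settings", "Subscription"),
    ("Help", "FAQ"),
    ("Account", "Plan & Billing"),
]
print(f"Success rate: {success_rate(upgrade_task, answers):.0%}")
```

Allowing more than one correct answer per task matters when the same page can reasonably be reached from several places in the tree.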

Other best practices for writing great tasks include making tasks actionable, setting a scenario, and avoiding overly precise instructions that could bias participants.

Tip ✨

When phrasing a task, it's best to avoid the exact keywords that appear in the tree, so participants can't succeed by simply matching words.

As a reminder, a tree test typically shouldn’t take longer than 20 minutes or include more than 10 tasks. For example, Melanie from Shopify shares that her team needed to test the taxonomy structure of a list of services, so they wanted to understand what path people would take when navigating different categories of services.

Here’s the task they created:

“Imagine you wanted to hire someone to help set up your business on Shopify; please find which service you’d have to hire for.”

Step 4: Recruit participants

When you’re preparing a tree test, an important element to take into consideration is the participants you will work with. The number of participants depends on a variety of factors like the type of testing you are conducting, your product’s target group, the confidence level you need, and the goal of your project.

Melanie Buset, Senior UX Researcher at Shopify, recommends using at least 50 users when running a tree test so you can identify user behavior trends and clear patterns.

And if you’re using a platform like Maze to run remote testing, you can easily recruit participants based on various filters like industry, language, device, age, gender, and country. This way, you’ll uncover a broader range of user preferences, and gather a more comprehensive understanding of your target audience.

Usually, a good rule of thumb is, once you start seeing pretty clear patterns emerge, then you’ve got enough participants. In my experience, having about 50 participants for tree testing is when you start to see these patterns clearly. It really doesn’t hurt to have more than 50, but I’d say aim for 50 minimum if possible. This also depends on the complexity of the problem you’re dealing with and what needs to be tested.

Melanie Buset, Senior UX Researcher at Shopify

The key thing for selecting the right participants is to spend time understanding your target audience and identifying who will be the most impacted if you were to make changes to your design.

Mario Tedde, Senior UX Researcher at FedEx Express, explains: “Let’s assume that you want to design a website that is going to be used by different types of personas such as psychiatrists, tutors, parents, and young adults that need mental care. If you decide to make one website that will fit all their needs, you need to consider performing tree testing with participants that represent all these personas.”

Step 5: Select the tree testing method

Tree testing can be run in-person or remotely using UX research tools like Maze. With in-person, moderated testing, the advantage is that you can ask participants why they made certain choices—or any other relevant questions that occur to you in the moment.

However, remote testing is advantageous because of its ease of use and speed. You will only need a web browser for testers to participate, and they can do so anywhere and at any time without you being present. Plus, if you’re using Maze, you can use templates to quickly create your tree structure by adding or removing blocks and organizing them according to your product structure.

Moderated tree tests give you the opportunity to figure out the why behind a participant’s actions and identify the rationale behind their decisions. To avoid biased answers, I let the participant do the exercise in silence and only start asking questions when they have completed the task.

Mario Tedde, Senior UX Researcher at FedEx Express

Step 6: Conduct a pilot run

An important step in the process is organizing a pilot test before your official tree test session to see if the test makes sense and works as expected.

Do a pilot test and practice with your team. Once you set up the actual tree test, ask someone to go through the tasks as a real participant would to ensure things will run smoothly.

Melanie Buset, Senior UX Researcher at Shopify

Atlassian recommends doing this by opening up the study to a small portion of participants from your panel. This approach will help you mitigate the risk of missing important details, adjust your instructions, and get more valuable insights for future sessions.

Pilot runs are useful as they bring new perspectives to the study. You will be able to find out what’s missing or what’s confusing and be prepared for the actual session.

Step 7: Run the tree test

This step is fairly straightforward. If you choose to conduct tree testing as a remote, unmoderated study, the testing tool will give you a link to the test that you can send to participants.

You can also pair the tree test with survey questions that participants answer before or after they complete the tasks. These questions can supply extra context about the participants, such as demographic information or their familiarity with the product.

Once all the participants have completed the test, you can start analyzing the results and making informed design decisions. If you want to test different versions of a tree (say, a new version against the existing one), you can run a split test and compare the two sets of results.

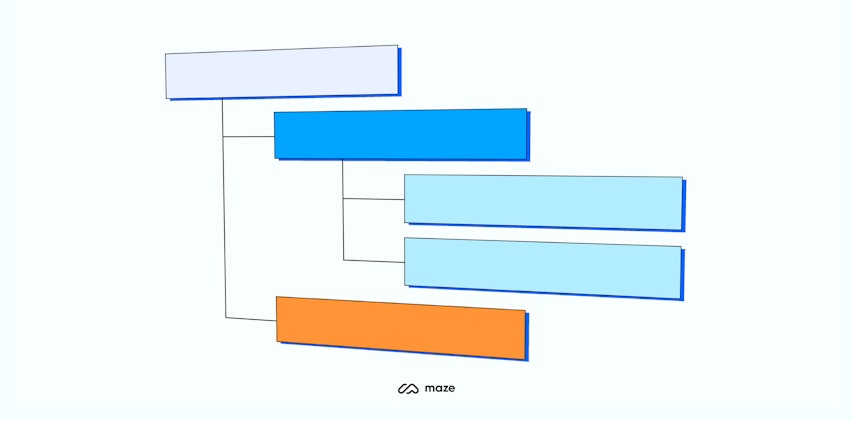

Step 8: Understand the tree testing results

After participants complete a tree test, the results will be recorded in the tool you are using, allowing you to start analyzing them (see how to analyze results in Maze). Typically, the metrics you can analyze for a tree test include success rate, directness, average time to complete a task, and the path taken by users.

- Success rate: the percentage of participants who completed the task by selecting a correct answer

- Directness: the percentage of participants who reached the correct answer on their first attempt, without backtracking through the tree

- Time: the time it took participants to finish a task

- Path: the routes participants took up and down the tree before selecting an answer
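
To make these definitions concrete, here is a minimal sketch of how the four metrics could be derived from raw results. The record format, field names, and sample data are assumptions for illustration; real tools export their own formats. The sketch also assumes that directness means reaching a correct answer without visiting any node twice.

```python
from collections import Counter
from statistics import mean

# Hypothetical results for one task. "path" lists each node visited in order
# and "seconds" is time on task; neither field name comes from a real tool.
CORRECT = {"Account/Plan"}
RESULTS = [
    {"path": ["Home", "Account", "Account/Plan"], "seconds": 12.0},
    {"path": ["Home", "Settings", "Home", "Account", "Account/Plan"], "seconds": 31.0},
    {"path": ["Home", "Help", "Help/FAQ"], "seconds": 20.0},
    {"path": ["Home", "Account", "Account/Plan"], "seconds": 9.0},
]


def is_success(result):
    """The final node selected is one of the correct answers."""
    return result["path"][-1] in CORRECT


def is_direct(result):
    """Success with no backtracking, i.e. no node was visited twice."""
    path = result["path"]
    return is_success(result) and len(path) == len(set(path))


def summarize(results):
    """Aggregate success rate, directness, average time, and the top path."""
    n = len(results)
    path_counts = Counter(tuple(r["path"]) for r in results)
    return {
        "success_rate": sum(is_success(r) for r in results) / n,
        "directness": sum(is_direct(r) for r in results) / n,
        "avg_seconds": mean(r["seconds"] for r in results),
        "most_common_path": path_counts.most_common(1)[0][0],
    }


print(summarize(RESULTS))
```

Comparing success rate with directness is often informative: a task with high success but low directness suggests participants get there eventually, but only after wandering.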

Once you ask your participants to go through the test, the tool will highlight which entries went down the correct path(s) and which didn’t. Seeing where people went off the ‘ideal path’ will help you identify where the navigation issues are within your product.

Melanie Buset, Senior UX Researcher at Shopify

These results often tie back to questions like:

- “Where did participants think they’d find your content?”

- “Did they find the navigation or wording confusing?”

- “What paths did they use first?”

- “Which was the place where they backed up to try a different path?”

- “How long did it take them?”

By analyzing the accumulated data, you can validate or invalidate your hypotheses and design a navigation that makes sense to users.

How to use tree testing with other usability testing methods

Tree testing has several specific use cases, which makes it ideal for certain research objectives, like assessing the findability, labeling, and information architecture of a website or app. Combined with other usability testing methods, it can unlock user insights with additional context and deeper understanding.

Card sorting

There are three types of card sorting: open (users create and name their own groups), closed (users sort cards into predefined groups), and hybrid (users sort cards into predefined groups and can also create and name their own).

In a card sort, users are given a set of cards (digital or physical), each representing a piece of content or functionality, and asked to categorize them in a way that makes sense to them.

The result is a user-centered view of how content should be organized and labeled, which can form the basis of your initial IA design. Once you’ve drafted the IA, you can then use tree testing to identify any issues with your structure or labels, and make necessary refinements. By using these methods in tandem, you ensure your site's IA is both informed by user expectations (through card sorting) and validated by user behavior (through tree testing).

Card sorting in Maze

First-click testing

First-click analysis pairs naturally with tree testing. While users are performing tasks in a tree test, pay special attention to the first category or option they choose when trying to find an item or complete a task. This is the 'first click.'

Generally, users that make a correct first click are more likely to complete their task successfully. If users make incorrect first clicks, something about your structure or labels may be misleading. Use this insight to refine your structure and make it more intuitive. Once you’ve adjusted your website, you can run another round of tree testing to check if users' first clicks (and overall success rates) have improved.
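
A quick way to check this relationship in your own data is to split participants by whether their first click was correct and compare success rates for the two groups. The sketch below assumes a hypothetical record format and made-up results.

```python
# Hypothetical records for one task: the first top-level category clicked
# and whether the participant ultimately selected a correct answer.
CORRECT_FIRST_CLICK = "Account"
RECORDS = [
    {"first_click": "Account", "success": True},
    {"first_click": "Account", "success": True},
    {"first_click": "Account", "success": False},
    {"first_click": "Settings", "success": True},
    {"first_click": "Help", "success": False},
    {"first_click": "Help", "success": False},
]


def success_by_first_click(records, correct_first_click):
    """Return success rates for correct vs. incorrect first clicks."""
    groups = {"correct_first_click": [], "incorrect_first_click": []}
    for record in records:
        key = (
            "correct_first_click"
            if record["first_click"] == correct_first_click
            else "incorrect_first_click"
        )
        groups[key].append(record["success"])
    # None signals a group with no participants rather than a 0% rate.
    return {
        key: (sum(outcomes) / len(outcomes) if outcomes else None)
        for key, outcomes in groups.items()
    }


rates = success_by_first_click(RECORDS, CORRECT_FIRST_CLICK)
print(rates)
```

A large gap between the two groups points at the top-level labels as the place to start refining.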

A/B testing

Combining A/B testing with tree tests can help evaluate the effectiveness of the different versions of your website’s IA. These versions can have variations in category labels, item organization, depth of the navigation tree, or any aspect of the IA you want to assess and optimize. Run a tree test by dividing your participants into groups and assigning them a series of tasks, then compare the data to identify the better-performing IA.
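
When comparing two trees, it helps to check whether a difference in success rates is larger than chance would explain. This guide does not prescribe a statistical test; the sketch below uses a two-proportion z-test as one common option, with hypothetical participant counts.

```python
from math import erf, sqrt


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided z-test for a difference between two success rates."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Everyone succeeded or everyone failed in both groups: no difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Standard normal CDF computed via the error function.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Hypothetical counts: 50 participants per tree, same task on both.
z, p_value = two_proportion_z_test(successes_a=28, n_a=50, successes_b=39, n_b=50)
print(f"Tree A: {28 / 50:.0%}, Tree B: {39 / 50:.0%}, z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the difference between the two trees is unlikely to be noise, though with few participants per group it is worth treating the result as directional rather than conclusive.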

After you’ve implemented the better-performing IA in your live environment, you can run an A/B test to confirm the results. Show some users the old version of your IA (as a control) and others the new version. You can then compare user behavior and key performance indicators (KPIs) across the two versions to validate the effectiveness of the new IA.

Usability testing with prototypes

Tree testing is typically conducted in the early stages of product development after you've designed a draft version of your IA, but before you've created any detailed designs or prototypes. This is where you identify and resolve any problems with your structure or labels early on, before you invest time and resources into detailed design work.

But tree testing is just one part of the larger usability testing process. Once you've refined your IA based on tree testing, the next step is to create a prototype. Prototype testing helps you validate design decisions and gain a deeper understanding of how users navigate and engage with your product. It provides a realistic and interactive environment for evaluating the user experience, enabling you to make iterations based on user feedback.

Setting up a usability test on Maze

What are the disadvantages of tree testing in user research?

Just as with all UX research methods, tree testing has ideal use cases and situations where an alternative method may be more suited. One of the benefits of tree testing is its tight focus on evaluating IA and navigation, but this can also be a disadvantage, depending on your objectives.

Tree testing tests the top-down organization of a site and the labeling of features, but this means you won’t get any feedback on other areas of navigation or the overall usability of the site, as you may with other research methods.

However, this can be easily mitigated by supplementing tree testing with additional types of research, such as card sorting or basic usability testing, to build a wider picture of any issues with your site’s design and structure.

The other main drawback of tree testing is that it often works best as a remote, unmoderated study. This means you miss out on qualitative data, such as comments users may make while conducting the test or wider context for how users approach the task at hand.

In the next chapter, we go over the five-second testing research method, which you can use to measure how well a design communicates a message.

Frequently asked questions about tree testing

Is tree testing qualitative?

Tree testing can be qualitative or quantitative, depending on what metrics you collect. The quantitative aspect of tree testing comes from metrics like task completion rates, time taken to complete tasks and user paths. On the other hand, you can gather qualitative insights from observing users' behavior, follow-up questions, or interviews after the test.

What questions to ask during tree testing?

During tree testing, you can ask questions like:

- Which category would you click on first to find [specific item or piece of information]?

- Where would you expect to find information about [specific topic]?

- If you were looking for [specific item], where would you go?

- What do you expect to find under this category?

- What does this label mean to you?

- Why did you choose this path to find [specific item]?

- Was there anything confusing about the labels or categories?

- Did you have any trouble finding [specific item]?

- How would you describe the organization of the information?

- What would you change about the structure to make things easier to find?

How many people are needed for tree testing?

The number of people for tree testing depends on the size and complexity of your tree, the diversity of your user base, and the resources available to you. But a general rule is to have at least 50 participants for each unique group of users you're testing. If your user base is very diverse, or if your tree is particularly large or complex, you might benefit from having more participants. Remember, the quality of your participants is just as important as the quantity.