Chapter 8

Understanding remote usability testing: A beginner's guide

This chapter looks at the remote approach to usability testing. Learn how to run effective remote tests and get actionable insights to make informed decisions.

A couple of decades ago, every usability test involved a consultant inviting a participant to complete tasks on a clunky desktop computer, then observing and noting their behavior. There was no such thing as 'in-person' or 'remote' usability tests, as everyone had to be in the same room to do any kind of UX research.

But since then, a steady flow of technological innovations and new testing tools has made remote usability tests a viable option. Nowadays, it's not uncommon for remote testing to be the preferable route to take.

Let's look at what remote usability testing is and how to best use this UX research method for improving user experience.

What is remote usability testing?

Remote usability testing is any testing that happens when the participant and the researcher are in separate locations. It has become more common as online tools have improved, and those tools are what make the sessions possible. The sessions are normally carried out through a usability testing platform such as Maze that records people completing the test, collects data, and generates insights that you can put into action right away.

The advantages of remote usability testing

Taking the remote approach to usability testing comes with some big pros:

- Finding willing participants is much easier, as users can take the test whenever they want, wherever they want

- It’s faster and cheaper to do quantitative usability testing, so you’re more likely to get statistically significant results

- You can test your design on a wider range of people from different parts of the world

- The time between creating the test and getting results is much shorter, which means your team can get data-driven design insights quickly

Some of the typical advantages that people see with remote usability testing are a broader and often a more diverse population to recruit from, rather than just the people who are near your office and can leave work in the middle of the day. There’s also less overhead involved in running the study, since they tend to require only a device and internet connection.

Behzod Sirjani, Founder of Yet Another Studio

Remote tests are easy to arrange as participants can take them whenever and wherever they want. You could share a link to a test on social media or in an email campaign and get actionable feedback on your design in minutes. This is a massive benefit over in-person testing, as you can test a lot more people in a lot less time—and with way less effort.

Remote user testing also works well with quantitative testing because it allows you to get data from a large sample size of test participants. You can measure the performance of your design or track usability metrics easily as the data can be recorded automatically, without the hassle of in-person sessions.
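To see why a larger sample helps, here is a minimal sketch that turns automatically recorded task results into a completion rate with a 95% confidence interval. It uses the Wilson score interval, which is one common choice and not something prescribed by this guide. The function name `completion_rate_interval` and the sample numbers are assumptions made up for illustration.

```python
import math


def completion_rate_interval(successes, participants, z=1.96):
    """Return a (low, high) Wilson score interval for a task completion rate.

    z=1.96 corresponds to roughly 95% confidence.
    """
    if participants <= 0 or not 0 <= successes <= participants:
        raise ValueError("need participants > 0 and 0 <= successes <= participants")
    p = successes / participants
    denominator = 1 + z ** 2 / participants
    centre = (p + z ** 2 / (2 * participants)) / denominator
    margin = (
        z
        * math.sqrt(p * (1 - p) / participants + z ** 2 / (4 * participants ** 2))
        / denominator
    )
    return max(0.0, centre - margin), min(1.0, centre + margin)


# Hypothetical data: the same 80% completion rate from 10 and from 100 testers.
for successes, participants in [(8, 10), (80, 100)]:
    low, high = completion_rate_interval(successes, participants)
    print(f"{successes}/{participants} completed: {low:.0%} to {high:.0%}")
```

The interval for 100 testers is noticeably narrower than the one for 10, which is the practical payoff of being able to reach more people remotely.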

There’s also an argument that people behave more naturally when taking a test in their own environment, so your insights will be based on a scenario that’s closer to real life.

Product tip ✨

With Maze, you can create a context screen and write follow-up questions for participants before, during, and after the test. These allow you to keep testers engaged and get the answers you need throughout the sessions even in an unmoderated environment.

The disadvantages of remote usability tests

One of the drawbacks of remote usability tests is that you have less control over the test environment and procedure overall. For example, when running remote unmoderated testing, if a task is unclear, you can’t be there to explain it in more detail and move them in the right direction.

To minimize these risks, write some introductory context and guidelines at the start of the test, so participants know what to expect. If you’re doing a moderated remote test, inform the participant of the session's goal, and make sure they have every bit of information necessary to understand the context.

Finally, it’s crucial to conduct a pilot for unmoderated remote tests to make sure the way you wrote the tasks is crystal clear. Remote usability testing isn’t less work than in-person testing; it often requires more planning and more communication. So, plan accordingly and make sure the tools and devices you’ll be using are working properly.

When is the best time to conduct remote usability tests?

The short answer is any time during the design process. Remote tests are faster and easier to conduct than in-person tests, so you don’t need to think as hard about whether they’re a worthwhile investment.

Once you start working on your design, conducting short, sharp remote tests to validate specific design features ensures your iterations are always backed by data. And since they’re easy to create and distribute, unmoderated tests are particularly useful for frequent testing if you’re short on time or money.

For the design team at Tiendanube, switching to unmoderated testing with Maze cut their testing process from weeks to days:

With in-person testing, it was very expensive for us to learn about our new designs. Now with remote user tests, we can move forward in one or two days.

Damián Horn, Head of Design at Tiendanube

By sharing a link to their test in user testing WhatsApp groups, they can test their designs with users around the world—and get results fast to support their design process.

6 tips for creating an effective remote usability test

To really see the benefits of remote usability testing, you have to set up your test in a way that’ll get actionable results. Remote testing doesn't mean less preparation or planning. On the contrary, the setup decides whether you collect data you can learn from and act on. Here are our top tips for making a test that gets the data you need, fast.

1. Pick the right type of test

There are two types of remote usability testing: moderated and unmoderated.

Moderated tests work much the same as traditional in-person usability tests, except the moderator and the user aren’t in the same room. Instead, the moderator observes the user on a video call using Zoom or similar software.

The advantage here is that you can still ask the user as many follow-up questions as you want, which means you can potentially get more varied answers from each session. Also, the user doesn’t need to trek to your office.

The downside is that it still requires a level of commitment on the user’s end—they have to do the test at a certain time with a specific setup, and the extra questions make it a more time-consuming experience all round. So you’ll probably get fewer takers, and they all need to be in your timezone. Unless conducting user tests at 3 am is your thing.

Unmoderated tests don’t have any back-and-forth between you and the user during the test. You create the tasks and write the questions in a usability testing platform like Maze, send them to users, and then they complete the test alone. The platform feeds the results back to you when they finish.

The good thing about unmoderated tests is that they only take a few minutes for users to finish, so it’s way less hassle for them. This potentially means a lot more people completing the test. You also don’t need to schedule or attend the test yourself, so more tests can be completed in less time. All this adds up to faster results for your team with less effort.

But since no one watches over unmoderated usability tests, you can’t be there to make sure that users reach the end—or that they don’t wander off for a Twix halfway through. So choosing the right scope for your test is key.

Takeaway

For a more traditional usability testing process, go with moderated remote testing. For a quantitative, time-saving approach, try unmoderated remote testing.

2. Narrow your scope

The scope of your test can make or break its chances of success. Obviously, you’ll want to test every inch of your product at some point. But with remote testing, it’s better to test specific flows than to throw everything into one mega test. There are a couple of reasons for this.

First, focusing your test on a few hypotheses will make your results much clearer one way or the other. The more designs you try to test at once, the longer your test—and the more blurry your results. Giving people fewer options lets you pinpoint design decisions and test them more rigorously.

Second, the shorter the test, the more likely the user is to finish it. This is especially important for unmoderated tests, as you can’t be there to guide their progress. And if they don’t make it to the end, you get distorted results. We recommend seven or eight tasks for unmoderated remote usability tests. The good thing is that distributing them is as easy as sending a link, so you can run small tests more frequently.

Moderated tests can be a little more complex, as you can have full conversations about what people are finding difficult and make notes on how they get stuck. And since the tests take more work to schedule, you might want to dig a little deeper to make the most of each one. Still, you should always keep tests on the short side to respect people’s time.

Takeaway

To get clearer data patterns—and to make sure people finish—unmoderated remote tests shouldn’t be longer than seven or eight tasks. Moderated remote tests can be less focused, but the same principle applies.

3. Start looking for participants ASAP

Or even better, you’ve already started looking. Because while it’s definitely easier to find people willing to take remote usability tests than in-person ones, it still takes time, especially if you need users with a particular background or job title. Remember that one of the main benefits of remote usability testing is being able to test with large sample sizes. So the more users you can find, the better.

The earlier you find the right target audience, the earlier you can start testing in the design process. This could save a lot of pain undoing your hard work later down the line, as your product will be user-centric right down to its foundations.

Here are a few places to start your search:

- Ask your customer success team to hook you up with your most active users—and most vocal feature requesters. They’ll have great insights and will be happy to help. And your CS team will thank you for making people feel heard.

- Reach out to specific segments of users in your email list who’ll be interested in testing a feature that’s relevant to them

- Create a pop-up on your site or in-app asking people for their feedback

- Post on social media to give your fans a chance to shape your product

- For new product releases, encourage early email subscribers to become beta testers

- If you lack time and users, you can hire test participants from a usability testing tool that has a user testing panel

Whatever method you try, start building a pool of users ready for testing as soon as you need them. If you leave it to the last minute, finding people can become a painful blocker in the usability testing process.

Takeaway

Finding users is the biggest potential bottleneck for remote research. So start looking as early as possible and make sure you've got a list of test participants that are open to giving you feedback for when you need to start testing.

4. Create extra-clear usability tasks

Clarity is super important when you create tasks for a remote usability test. Well-written and structured usability tasks will get you more accurate results.

Here are our top tips for task creation:

- Define your user’s goals: The goals your users have in mind when they use your product should influence the way you phrase your tasks. Make sure to research your users’ thinking before you get started.

- Give people context: Tell people about the test beforehand—what project it’s for, the kind of data you want, that you’re testing the design, and not them. That'll help them interpret what you’re asking them to do.

- Start with something easy: Give people a chance to get used to the testing process and the product with a simple opening task.

- Use plain, concise language: Avoid using internal terms or technical jargon that might confuse people. If there’s a copywriter in your team, ask for their feedback.

- Set one task at a time: Let users fully focus on one task before giving them another one. This will prevent them from getting overwhelmed and help you pinpoint exact design flaws in your data.

- Go with the flow: Make your test resemble a common flow of actions in your product, so your test is more realistic.

- Write actionable tasks: Use imperative verbs that prompt users to act. E.g., create, sign up, complete.

- Don’t reveal the answer: Give people scenarios, not directions. E.g., "You need to see a doctor. Book an appointment with this app." Phrases like "click on" and "go to" are too precise. The goal of usability testing is to see if people can complete a realistic task with your product without resorting to instructions.

Takeaway

How you write and structure the tasks will largely determine the success of your remote usability test. Use simple language based on your user’s goals, and avoid making your tasks too complex—or too obvious.

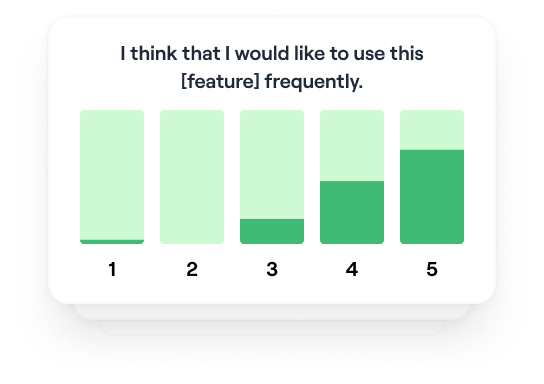

5. Ask effective questions for richer insights

Questions are a way to get more feedback out of your remote usability test. Even if you’ve gone for unmoderated testing, usability testing software like Maze lets you ask research questions before and after each task and at the end of the test.

Follow up with participants after each task to get people’s opinion on specific design elements, or ask general questions at the end of the test to get qualitative feedback.

Since you’ll need to write the questions in advance for a remote test, they need to be word-perfect. Here are a few pointers:

- Ask pre-test background questions: Segment your users according to their technical know-how and product habits. This is useful when you’re analyzing data later on, as you can identify trends in how different audiences use your product.

- Avoid leading questions: For mid-test questions, don’t ask ‘how easy’ or ‘how useful’ a design was. This plants an idea in your user’s head before they’ve answered, which will skew your data.

- Leave open-ended questions until the end: Asking how someone’s overall experience was can get you detailed, qualitative answers. But keep these questions to a minimum, as they take longer to answer.

And here are a few examples of well-written usability test questions:

- How was your experience completing this task?

- What did you think of the design overall?

- How was the language on this page?

- What do you think about how information and features are laid out?

If you want some more inspiration, you can take a look at the questions on the System Usability Scale (SUS). It’s a tried-and-tested usability survey that’s frequently used to measure product usability. The SUS even gives your product a usability score at the end.
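As a rough illustration of how that score comes about, here is a minimal sketch of the standard SUS scoring rule: ten statements rated from 1 to 5, where odd-numbered items contribute their rating minus 1, even-numbered items contribute 5 minus their rating, and the sum is multiplied by 2.5 to give a score from 0 to 100. The function name `sus_score` and the sample answers are made up for this example.

```python
def sus_score(responses):
    """Return the SUS score (0 to 100) for one participant's ten ratings."""
    if len(responses) != 10:
        raise ValueError("SUS needs exactly 10 responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be a whole number from 1 to 5")

    total = 0
    for index, rating in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: higher is better.
            total += rating - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: lower is better.
            total += 5 - rating
    return total * 2.5


# Hypothetical answers from one participant, in questionnaire order.
print(sus_score([4, 2, 5, 1, 4, 2, 5, 1, 4, 2]))  # prints 85.0
```

To get a score for the whole test, you would typically average the individual scores across participants.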

You can also ask demographic questions to segment your users by age, occupation, education, etc. This is useful for spotting usability trends for different groups of people, but keep in mind that asking people personal questions can make them feel awkward. So ask carefully, and make demographic questions optional—or you could risk people bouncing before they start.

Takeaway

The wording of your questions has to be very precise for remote user research because you only get one shot. Triple-check them before you send out the test.

6. Run a pilot test

Great researchers know that you have to test everything. And that includes testing your usability test. The last thing you want is to send your remote test to 100 people, then realize there’s a task-destroying typo in the first sentence.

So share a pilot test with colleagues and get their feedback on what could be improved. Get people from different teams to try it out, as copywriters will see it with different eyes to the customer success team. Colleagues outside your own team will also experience it totally fresh, so their perspective will be closer to your users’.

If you're running a moderated test session, make sure your internet connection works well and your setup is ready. You have probably already prepared everything in the user testing tool of choice, but also make sure other tools like video conferencing or note-taking apps are set up properly before you start the testing session.

If you're running an unmoderated test, send it in batches—not all at once. There’s a good chance you’ll realize something is off after the first batch or two. This way, you’ll be able to fix it and avoid any major embarrassment.
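If you manage your participant list yourself, batching can be as simple as splitting the list before you send anything. The sketch below is only an illustration: the helper `split_into_batches`, the batch size, and the example addresses are all hypothetical, and how you actually send the link depends on your own tools.

```python
def split_into_batches(participants, batch_size):
    """Split a list of participants into consecutive batches of batch_size."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [
        participants[start:start + batch_size]
        for start in range(0, len(participants), batch_size)
    ]


# Hypothetical participant list; replace with your own recruits.
emails = [f"tester{n}@example.com" for n in range(1, 8)]

for number, batch in enumerate(split_into_batches(emails, 3), start=1):
    # Send the test link to this batch, review the results, then continue.
    print(f"Batch {number}: {len(batch)} participants")
```

Keeping the first batch small means a broken task only affects a handful of people before you spot it.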

Frequently asked questions about remote usability testing

What does remote testing mean?

Remote testing is any testing that happens when the participant and the researcher are in separate locations. In a remote test, the participants complete the tasks in their natural environment using their own devices. The sessions are facilitated by online tools and can be moderated or unmoderated.

How can I test an app remotely?

You can choose between two types of remote usability testing: moderated and unmoderated. The sessions are typically carried out through a usability testing platform such as Maze that allows you to share a link to your product or prototype, record people completing the test, collect data, and generate insights that you can put into action right away.

What are the best practices for remote testing?

When running a remote usability test, you first need to choose whether moderated or unmoderated remote testing is more appropriate for you. Once this is clear, we recommend focusing your test on a few hypotheses: your results will be much clearer, and users will be more likely to finish the test. Also, start looking for participants as early as possible to make sure you've got a list of users ready for testing as soon as you need them.

Clarity is super important in a remote usability test. So, make sure your usability tasks are extra-clear by using simple language and avoiding making them too complex. You can run a pilot test and share it with your colleagues to see what could be improved before you test with users. Finally, during the test, remember to follow up with participants after each task or at the end of the test to get richer insights.