A Beginner's Guide to Usability Testing

Usability testing is a proven method to evaluate your product with real people. In this complete guide to usability testing, we share everything you need to run usability tests and collect actionable insights to create better user experiences.

Chapter 1

What is usability testing? How to evaluate user experience (step-by-step)

Picture this: Your team has spent countless hours ideating and developing your product with the perfect design and functionality. But a nagging question lingers in your mind: "Will users find it as intuitive as I do?"

Usability testing is the unsung hero that reveals authentic user experience. Even if you don’t have any doubts in your mind about how intuitive your product is, it’s crucial to validate your assumptions. Plus, if you return to usability testing throughout the product lifecycle, you’ll catch issues quickly and see how user behavior changes over time. It’s a win-win.

What is usability testing?

Usability testing is a type of user research that evaluates the user's experience when interacting with a website or app. It helps your designers and product teams assess how intuitive and easy-to-use products are.

The usability testing process identifies problems with your product you may have otherwise missed—by asking real users (instead of developers or designers) to complete a series of usability tasks on the product. The results, success rate, and paths taken to complete the tasks are then analyzed to identify potential issues and areas for improvement.

The ultimate goal of usability testing is to create a product that solves your users’ problems and helps them achieve their objectives with a positive experience.

What usability testing is not

Before we can delve into the specifics of usability testing, we need to dispel some misconceptions. User research, user testing, and usability testing are often used interchangeably—but they aren’t necessarily the same.

User research refers to the overarching act of gathering feedback and insights from product users, and using this to inform product decisions. Usability testing is a specific kind of user research done to assess the usability of a product or design.

To help clarify, here are some UX research methods and techniques that don’t qualify as usability testing:

- A/B testing: A/B testing is a statistical method of comparing and testing design variations to identify which the user prefers, and which changes improve performance. A/B testing is about preferences and provides only quantitative data, whereas usability testing is about behavior, and can provide both quantitative and qualitative data.

- Surveys: Surveys can help you collect data from a large number of users or customers, typically in the form of multiple-choice questions, sentiment, or ratings. Surveys are great for understanding how users feel about a product, but don’t show you how they interact with it.

- Focus groups: During a focus group, a moderator guides the discussion and asks open-ended questions to encourage participants to share their opinions and ideas. The goal is to gain insights into people's attitudes and experiences related to the topic.

- User testing: This is a broad term that can either refer to user research as a whole, or specifically the process of testing products and ideas with real users. The latter takes a quantitative approach to collect user feedback, and typically comes before usability testing. It doesn't give you qualitative data about why users struggle to complete tasks.

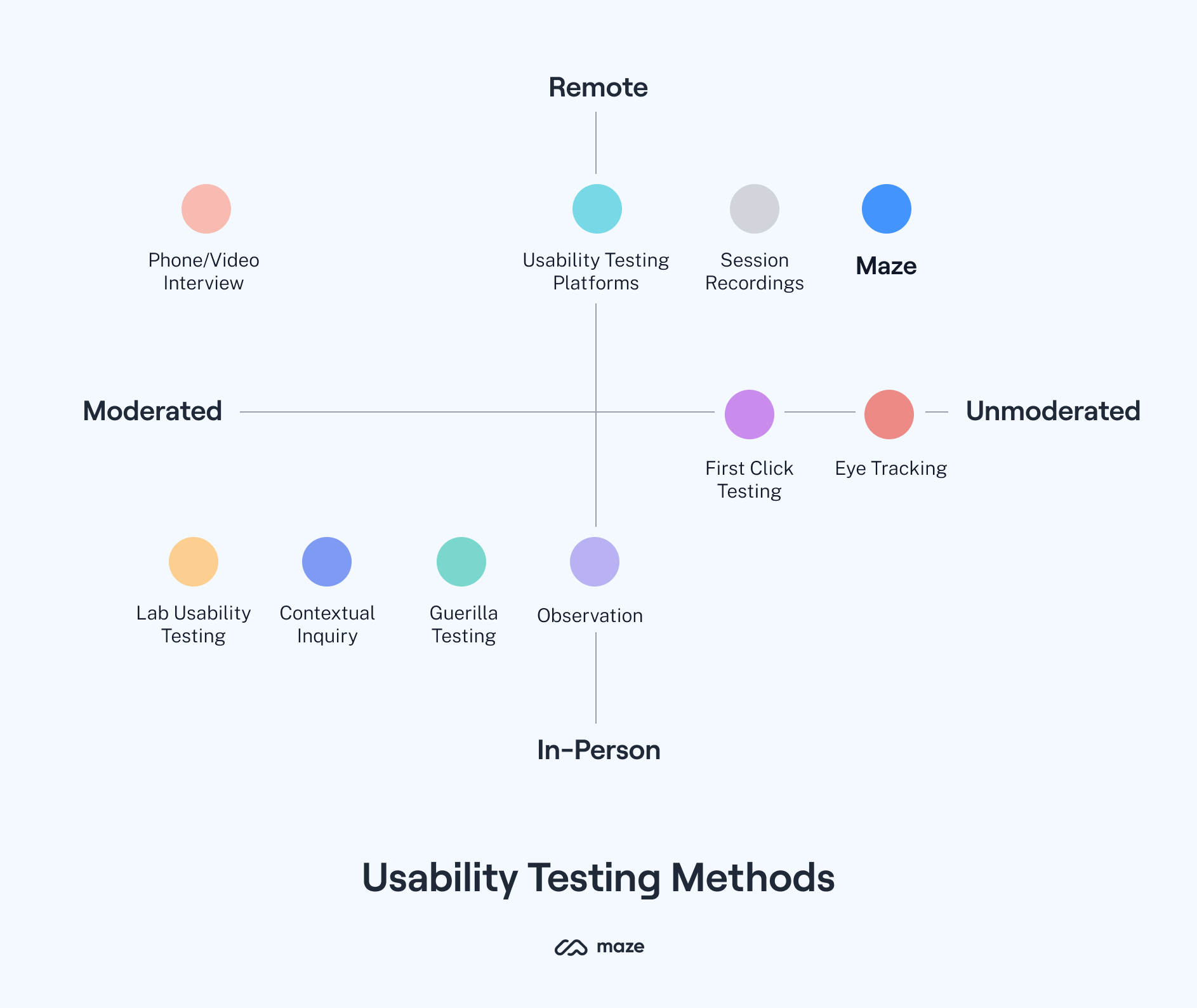

Types of usability testing

The kind of test you want to run will help you choose the right usability testing method. All product research and testing broadly falls into three main categories:

- Qualitative or quantitative

- Moderated or unmoderated

- Remote or in-person


1. Qualitative or quantitative

Any user research will fall into the category of qualitative or quantitative. You ideally want usability testing to gather both kinds of data, to provide a rounded evaluation of the user experience.

Qualitative usability testing focuses on the ‘why’; understanding users' experiences, thoughts, and feelings while using a product. For example, you could conduct a think-aloud study where users verbalize their thoughts while using your product to complete usability tasks. Qualitative data can be gathered from observation, interviews, and surveys.

Quantitative usability testing focuses on collecting and analyzing numerical data like success rates, task completion times, error rates, and satisfaction ratings. It’s about identifying patterns, making predictions, and generalizing findings.

2. Moderated or unmoderated

Moderated and unmoderated usability testing are two different approaches to usability. In moderated usability testing, a moderator guides the users through the test (in-person or remotely). They answer any questions participants may have, ask follow-up questions, and record observations during the test.

Unmoderated usability testing, as the name suggests, doesn’t involve a moderator. Users complete tasks independently, typically using usability testing tools that record their actions and responses.

3. Remote or in-person

Research can be done remotely or in-person, depending on the type of product you're testing and your research goals.

Remote usability testing can be moderated or unmoderated, and is done using online tools or software that allows users to share their screens, record their activity, and provide feedback. It’s useful because your team and test participants can be based in entirely different locations.

In-person usability testing, on the other hand, is conducted in a physical location, usually a usability lab or other research facility. For that reason, it can be more expensive, time-consuming, and limiting in terms of sample size and geographic reach. Many researchers opt for remote research. However, in-person testing may be necessary for products that require safety considerations, supervision during use, or physical testing.

Product tip ✨

See how companies like Shopify, Typeform, ElectricFeel, Movista, and Trint run successful usability tests in these real-world usability testing examples.


5 Key benefits of usability testing

Usability testing provides insights into user preferences, motivations, and goals. To help you get the lowdown, we spoke to experts in the research and product space to get their perspectives on how to do effective usability testing.

First up, here are some key benefits:

- Reduce developmental costs

- Tailor products to your users

- Increase accessibility

- Increase user satisfaction and brand reputation

- Combat cognitive biases

1. Reduce developmental costs

When you run usability tests, you save time and money by avoiding costly development mistakes. For example, if you find out users struggle to navigate a specific feature, you can fix this before launch. It's significantly cheaper to make changes before launch than after a product has already been released.

2. Tailor products to your users

“We all have unique backgrounds, lived experiences, perspectives, preferences, and abilities. All of these things influence how we understand, approach, and experience a product,” says Behzod Sirjani, Founder of Yet Another Studio. So, even if you understand your product, your users might approach it differently.

By talking to users directly and observing how they experience your product, you can better understand their needs and tailor the product to work for them—ultimately serving their needs and solving their problems more effectively.

3. Increase accessibility

Accessible products are designed and developed to be usable for as many people as possible—including those with physical, visual, auditory, or cognitive requirements.

Of course it’s important to comply with accessibility standards and regulations, but your product will also benefit from prioritizing accessibility. When you include users with diverse abilities and needs in the usability testing process, you’re contributing to and promoting a more equitable digital landscape.

Discover more best practices for creating products and experiences that are designed for everyone in our Inclusive Design Guide.

4. Increase user satisfaction and brand reputation

“Usability testing is one of the most fundamental ways to test the success of a product. Ensuring customers can find, understand, and use a solution contributes to the overall success of a product and business,” says Taylor Palmer, Product Design Lead at Range.

Usability testing enables product teams to identify potential issues and make improvements before releasing a new product or feature. This leads to more consistently positive user experiences, which helps build a loyal user base and strengthens your overall brand reputation.

5. Combat cognitive biases

Our brains love to create shortcuts to make quicker decisions or inferences. They’re just trying to be efficient, but this can lead to subconscious beliefs or assumptions, called cognitive biases.

Usability testing helps combat biases like the false-consensus effect by providing objective feedback from real people, ensuring that design decisions are based on authentic user behavior rather than the assumptions or opinions of those already familiar with the product.

Product tip ✨

Maze allows you to test your prototype and live website with real users, to filter out cognitive biases and gather actionable insights that fuel product decisions.

When should you do usability testing?

You need to carry out usability testing continuously to make sure your product stays relevant and solves your users’ most pressing problems throughout its lifecycle. Here's a quick overview of when to do usability testing:

- Before you start designing

- Once you have a wireframe or prototype

- Before launching the product

- At regular intervals after launch

“Usability testing often happens towards the end of the product development process and is seen as a way to 'catch bugs' in the product. That's not usability testing, that's QA. Usability testing needs to happen regularly, at times where you can learn how usable your product is, and take those learnings to make improvements so your product is more intuitive.”

Behzod Sirjani, Founder of Yet Another Studio

1. Before you start designing

You can identify user needs, expectations, and potential pain points early on through preliminary research, surveys, or even testing competitors' products. With that data as a benchmark, you'll start the design process with a solid foundation for creating a user-centric product that's even more intuitive to use.

2. Once you have a wireframe or prototype

"I think usability testing is applicable at every stage of the design process. I test at an early concept phase, and once we get the first results, we use them throughout other design phases and re-test with users," says Vaida Pakulyte, UX Researcher and Designer at Electrolux.

For example, you could use card sorting and tree testing on your wireframe or prototype to discover how users would approach the product—or make sure the existing design is easy to navigate.

Product tip ✨

If you have a Figma, InVision, or Sketch prototype ready, you can import it into Maze and start testing your design with users immediately. Get started for free.

3. Before launching the product

At this stage, you already have a working, or almost-working, product. Now it’s time to evaluate its overall effectiveness through summative testing. The goal of summative testing is to measure how well your product performs against specific usability criteria like efficiency, effectiveness, and user satisfaction. Each testing task should represent a typical user scenario.

4. At regular intervals after launch

Even after your product goes live, it’s crucial to ensure it remains user-friendly, accessible, and relevant over time. With a research tool like Maze, your team can create and run live website testing and discover issues caused by browser updates, third-party integrations, or changes in user behavior patterns.

How to do usability testing

Regardless of your resources or budget, it's possible to set up and run usability tests. Following a consistent process is key to getting started:

- Define a goal and target audience

- Use a tool that enables continuous testing

- Establish evaluation criteria

- Create a usability testing script

- Be mindful of length

- Run a pilot test

- Recruit test participants

- Create a positive testing environment

- Analyze and report your findings

- Iterate and repeat

1. Define a goal and target audience

The point of testing is to deliver a product focused on providing a positive user experience. To do that, you need to understand who your users are. Start by asking questions like:

- What’s your target demographic (e.g. age, location, profession)?

- What specific tasks do you want to enable users to accomplish with your product (e.g. checkout process, changing their username)?

- What are your desired timeframes for users to complete tasks and navigate the interface?

Once you have your answers, formulate a problem statement that makes it clear what insights you want to gain. For example: "We want to assess whether customers aged 20–30 can complete checkout in our product within 3 minutes." This will help you determine the right research methods and metrics for evaluating the users’ experience.
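A problem statement like this can be turned into a simple pass/fail check once test data comes in. The sketch below is only an illustration: the record fields (`age`, `completed`, `seconds`) and the sample values are hypothetical, not the output of any particular testing tool.

```python
from dataclasses import dataclass


@dataclass
class CheckoutAttempt:
    age: int          # participant age (hypothetical field)
    completed: bool   # did the participant finish checkout?
    seconds: float    # time spent on the checkout task


TARGET_SECONDS = 180        # "within 3 minutes" from the problem statement
AGE_RANGE = range(20, 31)   # ages 20 to 30, inclusive


def share_meeting_goal(attempts):
    """Fraction of in-scope participants who completed checkout within the target time."""
    in_scope = [a for a in attempts if a.age in AGE_RANGE]
    if not in_scope:
        return None  # nobody from the target audience took part
    met = [a for a in in_scope if a.completed and a.seconds <= TARGET_SECONDS]
    return len(met) / len(in_scope)


# Made-up sample data for illustration only.
attempts = [
    CheckoutAttempt(age=24, completed=True, seconds=150),
    CheckoutAttempt(age=29, completed=True, seconds=200),   # too slow
    CheckoutAttempt(age=22, completed=False, seconds=90),   # gave up
    CheckoutAttempt(age=45, completed=True, seconds=100),   # outside target audience
    CheckoutAttempt(age=30, completed=True, seconds=180),
]

print(share_meeting_goal(attempts))  # 0.5
```

Writing the goal down this precisely forces you to decide up front who counts as in scope and what "success" means, which keeps the later analysis honest.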

2. Use a tool that enables continuous testing

It’s worth investing in a tool that helps your team carry out fast, scalable usability testing. We recommend choosing one that lets them test early and often in the process, including after launch.

This continuous research can inform your design decisions, keep you updated on evolving user needs, and will ultimately help retain users. For example, a tool like Maze supports testing throughout the product development lifecycle, and after launch.

Here’s what you and your team can do with Maze:

- Live website testing: Perform usability testing tasks on a live website or web-based application

- Prototype testing: Integrate with your prototypes from Adobe XD, Figma, Sketch, and InVision to validate design concepts early

- Usability testing templates: Hit the ground running with pre-built templates to save time and ensure your tests are designed to capture relevant insights

3. Establish evaluation criteria

How do you know if your team is evaluating the right thing? Establishing your evaluation criteria will help focus your team on specific elements of the user experience.

Depending on the goal of your test, consider evaluating usability metrics like:

- Task completion rate

- Direct and indirect success

- Time-on-screen

- Time-on-task

- User satisfaction score

- Misclick rate

For example, to measure usability during a checkout process, you can review metrics like the time it takes for users to complete the checkout and the overall success rate (i.e. percentage of successful purchases). It’s also a good idea to establish benchmark test results using earlier prototypes or competitor products, so you can track user performance as your product evolves over time.
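If your testing tool doesn't report these metrics for you, most of them can be computed from raw session data in a few lines. The following is a minimal sketch under stated assumptions: the session fields are hypothetical, "direct" success is assumed to mean finishing via the expected path, "indirect" success means finishing via any other path, and time-on-task is averaged over completed attempts only. Your tool may define these differently.

```python
from statistics import mean

# Hypothetical session records for a single task; values are made up.
sessions = [
    {"outcome": "direct",   "seconds": 95,  "clicks": 8,  "misclicks": 0},
    {"outcome": "indirect", "seconds": 160, "clicks": 15, "misclicks": 4},
    {"outcome": "fail",     "seconds": 210, "clicks": 20, "misclicks": 9},
    {"outcome": "direct",   "seconds": 110, "clicks": 7,  "misclicks": 1},
]


def summarize(sessions):
    """Compute basic usability metrics for one task."""
    if not sessions:
        raise ValueError("need at least one session")
    total = len(sessions)
    completed = [s for s in sessions if s["outcome"] != "fail"]
    total_clicks = sum(s["clicks"] for s in sessions)
    return {
        # Share of participants who finished the task by any path.
        "completion_rate": len(completed) / total,
        # Share who finished via the expected path (assumed definition).
        "direct_success_rate": sum(s["outcome"] == "direct" for s in sessions) / total,
        # Average time for completed attempts only (an assumption of this sketch).
        "avg_time_on_task": mean(s["seconds"] for s in completed) if completed else None,
        # Misclicks as a share of all clicks.
        "misclick_rate": sum(s["misclicks"] for s in sessions) / total_clicks if total_clicks else 0.0,
    }


for name, value in summarize(sessions).items():
    print(f"{name}: {value:.2f}")
```

Running the same function over results from an earlier prototype or a competitor product gives you the benchmark numbers to compare against.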

4. Create a usability testing script

A well-crafted usability testing script outlines the tasks to be performed, instructions to be given, and questions to be asked. It serves as a roadmap for the test participant and provides an opportunity to identify any potential problems or areas of improvement. Head to chapter five for an in-depth walkthrough on creating your usability tasks and script.

5. Be mindful of length

Keep your tests short and sweet. The right duration depends on factors like the product's complexity, the scope of the testing objectives, and participant engagement level. As a general guideline, usability tests last 15–20 minutes on average and include 5–10 tasks. A test should be short enough to maintain participants' focus, while giving your team sufficient time to gather meaningful insights.

6. Run a pilot test

If you want to nail usability testing, getting your team to do a pilot run is a smart move. Ideally, your pilot participant should be someone inside your organization who isn't directly involved in your project, since people close to the project might have preconceived notions about how the product works. Alternatively, you could test with a small batch of participants who represent your target user.

The pilot test is where you evaluate the clarity and effectiveness of your testing materials, like the script, tasks, and instructions. You can also validate the feasibility of your chosen testing methods, tools, and environment. Think about it as the usability test for your usability test.

7. Recruit test participants

“No matter how much time you spend on ideation and prototyping your design solution, you should always test it with real people,” says Nick Babich, Editor-in-chief of UX Planet. You must recruit participants that represent your target audience and have similar backgrounds, preferences, and experiences as your intended users.

Here are three easy ways to recruit participants:

- Invite them: You can invite participants directly from your website or browser-based products using popover invites. If you’ve created tests and surveys with Maze, you can use In-Product Prompts to embed surveys, tasks, or recruit relevant participants.

- Recruit existing users: If you’re testing an existing product, you can source testers from your current user base. With recruitment tools like Reach, you can directly create participant databases from your CRM, segment them, and send personalized emails.

- Recruit from a panel: You can also hire participants quickly from service providers like the Maze Panel, which has 121,000+ users across the globe.

When recruiting participants, consider their availability and willingness to participate in the session. Screen participants based on your criteria and avoid those who aren’t a good fit for the test.

8. Create a positive testing environment

Usability testing should feel like an interactive conversation, not an interrogation. To get the most out of your usability test, be sure to brief your team on how to interact with participants and reduce bias. Your researchers should avoid leading questions that might influence participants' behavior—and each participant should feel comfortable providing honest feedback.


9. Analyze and report your findings

When you transform raw data and participant feedback into actionable insights, you'll be able to identify user pain points, prioritize UX improvements and communicate them effectively within your team.

Some metrics you should be tracking include:

- Usability metrics like total testers, bounce rate, misclick rate, and average duration

- Mission paths for how participants interacted, and optimal path analysis

- Success metrics based on how many testers deviated from the intended flow

- Closed and open questions with a visual representation of responses
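Path analysis in particular is easy to prototype yourself. As a rough sketch, assuming each tester's journey is recorded as an ordered list of screen names (the screen names and the expected path below are hypothetical), you can count the most common paths and the share of testers who deviated from the intended flow:

```python
from collections import Counter

# Hypothetical intended flow for a checkout task.
EXPECTED_PATH = ("home", "cart", "shipping", "payment", "confirmation")

# Made-up recorded paths, one tuple of screens per tester.
paths = [
    ("home", "cart", "shipping", "payment", "confirmation"),
    ("home", "search", "cart", "shipping", "payment", "confirmation"),
    ("home", "cart", "shipping", "payment", "confirmation"),
    ("home", "cart", "help"),
]


def path_report(paths, expected):
    """Summarize how testers moved through the task relative to the expected path."""
    if not paths:
        raise ValueError("need at least one recorded path")
    counts = Counter(paths)
    deviated = sum(1 for p in paths if p != expected)
    return {
        "most_common": counts.most_common(1)[0],   # (path, number of testers)
        "deviation_rate": deviated / len(paths),   # share who left the intended flow
    }


report = path_report(paths, EXPECTED_PATH)
print(" > ".join(report["most_common"][0]), f"({report['most_common'][1]} testers)")
print(f"deviation rate: {report['deviation_rate']:.0%}")
```

Looking at where the deviating paths first diverge from the expected one (here, `search` and `help`) is often the quickest way to spot a confusing screen.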

Product tip ✨

Download your Maze Reports or embed them into your favorite tools like Airtable, Coda, Confluence, FigJam, Jira Cloud, and Miro.

10. Iterate and repeat

Usability testing is an ongoing process that requires iteration, feedback loops, and revisions throughout the product lifecycle. You can test different versions of a design and analyze the results to determine which one works best. As your product and audience grow, it's important to re-evaluate your usability testing process and adapt it to accommodate new goals, user segments, and market conditions.

Transform your product experience with usability testing

Usability testing can help you create user-centric products that deliver exceptional experiences. When you understand user behavior, preferences, and needs, you can create intuitive products your customers will love.

When done correctly, usability testing can help your team:

- Identify user pain points and UX issues

- Generate ideas for feature improvements and product updates

- Prioritize design decisions with data-backed insights

- Validate assumptions and understand users’ context of use

- Develop better products faster

If you're unsure about how to implement usability testing, try using a continuous product discovery tool like Maze. Your team can create or upload existing prototypes, assign tasks and questions to testers, recruit participants, and even download reports with actionable insights—all from one central hub. And if you need more resources, Maze University provides free tutorials, feature updates, and more to help you get started. Happy testing!

Head on to chapter two to dive into the difference between moderated and unmoderated usability testing, and when to use each type.

Frequently asked questions about usability testing

What are the six types of usability tests?


The six types of usability testing are:

- Qualitative or quantitative testing: Qualitative testing involves gathering subjective feedback from users about their thoughts and feelings, while quantitative testing gathers numerical data to analyze user behavior

- Moderated or unmoderated testing: Moderated testing involves a facilitator being present while the user completes usability tasks. Unmoderated testing involves users completing tasks independently

- Remote or in-person testing: Remote testing involves users completing tasks from the comfort of their own homes or workplace, while in-person testing involves users completing tasks at a centralized research location

What’s the difference between user testing and usability testing?


The difference is that user testing is a broad method of research which evaluates the user experience from a holistic perspective. Meanwhile, usability testing is a more specific type of user research, used to evaluate how easy it is for users to achieve their goals using your product.

- User testing involves gathering feedback on various aspects of the product or service, such as design, content, and functionality. Interviews, surveys, focus groups, and usability testing are all forms of user testing.

- Usability testing is typically conducted at various stages of the design process, from initial concept to post-launch evaluation. It involves asking participants to perform specific tasks related to the product or service while their interactions and feedback are observed and recorded.

How do you write a usability testing report?


To write a usability testing report, start by analyzing the results, focusing on key topics such as user behavior, task completion rate, user satisfaction, and errors made. Then provide a high-level overview of your findings and an action plan for your team.

You can also automatically generate a usability testing report with Maze, which highlights all usability metrics, card sorting analysis, tree testing analysis, and open and closed question analysis. Plus, you can download the report or embed it into tools like Miro and Jira Cloud.

What are the best practices of usability testing?


The best practices of usability testing are to:

- Challenge assumptions: Don’t assume you know how users will interact with your product. Test it!

- Get consent: Ask your users for permission to observe them during their test and let them know how their data will be used

- Decide what metrics to measure: Based on your goals, decide what data points are important to track

- Run a pilot test: Before launching a full usability test, run a pilot test with internal teams to ensure the test is set up properly and the right questions are being asked

- Be inclusive: Ensure you have a diverse group of participants that accurately reflect your entire audience, including secondary personas

- Recruit diverse participants: Recruit people with different backgrounds, ages, genders, and abilities to get a better understanding of how your product works for different users
