Consider this: you’re developing a brand identity, and you’ve sketched important design elements, including the logo, the website, and so on. With the sketches ready, you're sure these elements show your brand’s cheeky personality.

But hang on a second: isn’t this brand perception an idea in your mind? Who’s to say your target audience will feel the same?

Enter: preference tests that can help you pick your users’ brains early on in the design phase. Not only do these tests help you understand your users' perceptions of your brand, but they also help you learn which visual design appeals to them.

Not sure how to conduct a preference test? We’ve put together this guide to tell you that and much more, including what a preference test is, what its limitations are, and how it differs from an A/B test.

Let’s get going.

What is preference testing?

Preference testing is a research method that involves sharing two or three design variants with participants and asking about their preference – which one they like and why. It can help you learn:

- How users perceive your brand and how it makes them feel

- How users judge a design’s content, visual appeal, and overall trustworthiness

But here’s the key to crushing preference tests: don’t just show your designs to test participants and ask them which they like or find trustworthy. Instead, also ask follow-up questions to understand why. This gives you qualitative feedback, which helps you understand how to improve the prototype based on user preference.

Need only quantitative data from preference tests? Keep in mind that you can use either of the two types of tests that Jolene Tan-Davidovic, Senior User Researcher at N26, shares:

- Qualitative preference tests

“[These] are usually in the form of an interview where a user is shown one or more versions of a design and then asked which one they prefer. The users are also probed on what their impressions are of each design, their attitudes about it, and why they prefer one over the other.”

- Quantitative preference tests

“[These] can take the form of a survey with users selecting which design they prefer and what their attitudes are about each design. This allows us to get feedback from many more users than qualitative tests, enabling us to be more confident that the findings are generalizable to all users.”

That said, Tan-Davidovic warns, “quantitative tests are suitable only when the design is relatively straightforward and doesn’t cut across multiple screens. It only makes sense to conduct a quantitative preference test when we're sure that we understand the reasons why users would prefer one design over the other and the context that the user is facing.”

Why are preference tests important?

Preference tests are important because they give you a direct glimpse into your users’ likes and dislikes and help you understand how your users feel about different visual designs. They allow you to pick your users’ brains early on in the design phase, before you’ve invested too much time and energy into a design that may not appeal to your user.

Unlike other tests, such as concept validation, preference tests focus specifically on the visual aspects of products and design, drilling down into everything from broad perceptions of your brand to specific visual designs.

As Mitchelle Chibundu, Lead Product Designer at Flutterwave, puts it, preference tests give you “insight into which designs resonate more with your users.”

What is the goal of preference testing?

The goal of preference testing is to understand what visually appeals to your target user, and why. This deep understanding of their design preferences can then be used throughout multiple stages of the design process, from broad planning of color schemes and layouts, through to specific decisions regarding fonts and icons.

When should you conduct preference tests?

Preference tests come in the early design phase “before [you] invest to refine the design,” advises Tan-Davidovic. At this point, you’d “want to understand which is a more viable direction and why.” A preference test can answer that for you.

If you’re planning a redesign, let’s say a website redesign, you can conduct a preference test here as well, testing your design against a competitor’s.

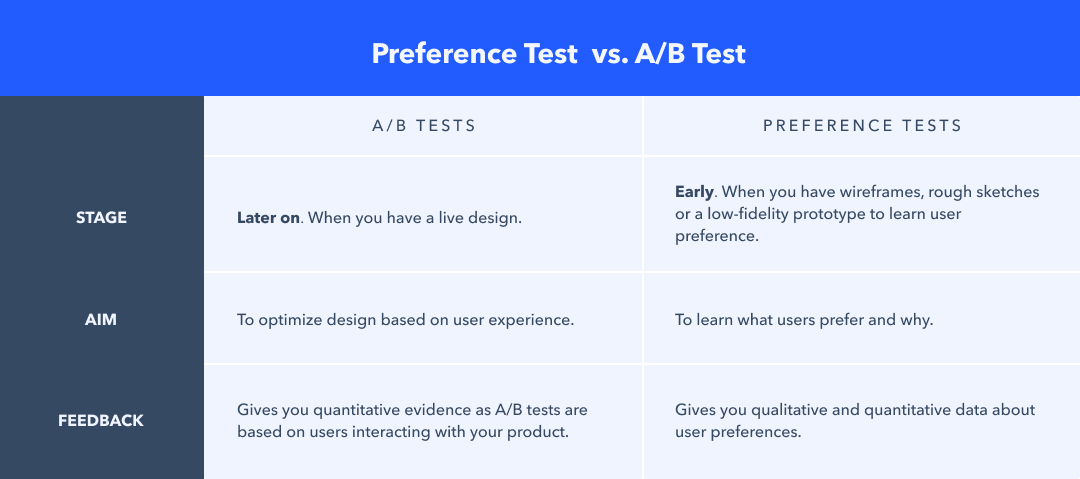

That said, be aware: preference tests aren’t A/B tests. Want to learn more about how the two differ? Read on.

A/B testing vs. preference testing: what’s the difference?

The short answer is: A/B tests come later on in the process when your design is close to ready and users can interact with it in a live setting to give their feedback. Preference tests, on the flip side, help in the early stages when you have a rough sketch or wireframe to share with test participants.

To go into detail, Chibundu explains, “Preference testing is more about understanding what designs the user prefers and why they prefer them before the product is completed.”

Example: You have three different homepage layout sketches for a new product ready. Conduct a preference test here to learn which layout potential users will prefer instead of making assumptions yourself.

“On the other hand, A/B Testing is KPI-based,” continues Chibundu. “It's about finding out if behaviors are being influenced by different variants and how people use a product to achieve a goal.”

Example: Let’s say there’s been a recent drop in your eStore’s newsletter signups. You have a few options to encourage more signups, such as a different CTA box color in each design variant. Show these options to users to A/B test their behavior and learn which CTA color gets more signups.

How to conduct preference tests

So you’ve decided to do some preference testing. How do you go about conducting one? Follow these steps:

Step 1: Identify your objectives and gather research material

Do you plan to understand which design variant users prefer? Or, do you want to learn how they perceive each design? Whatever your research objective is, lay it out at the top of your research board so that you can share it with your test participants.

At the same time, settle on which type of feedback you want to gather – is it going to be qualitative or quantitative? Don’t forget to ensure all design variants are handy.

Tip: Try to keep the test variants between two or three because when it gets more than these, it becomes difficult for people to make a comparison.

Mitchelle Chibundu, Lead Product Designer @ Flutterwave

Step 2: Decide how to measure responses

Based on whether you’re conducting qualitative or quantitative preference testing, plan how you’d want test participants to share their preferences.

You could:

👉 Ask open-ended questions where participants explain their choice. For such an interview, spend 15-30 minutes per participant, Chibundu recommends.

As for the questions to ask, Chibundu shares a list:

- Which of these do you prefer?

- Why do you like this?

- How well do you understand what is required of you on this page?

- How clear was the information on this page to you?

- How easy was it for you to navigate this screen?

- What do you like about how this page looks?

👉 Give them a closed list of adjectives. These could be 3-5 words that describe a design variant, for example, clean, minimal, classic, elegant, and so on.

👉 Give test participants an open word choice where you ask them to share 3-5 adjectives/words that they think describe the design variant.

👉 Gather numerical ratings: Ask participants to rate how well each design shows particular brand qualities.

Tip: To assess preference, you could ask 'In your opinion, which one would be a better solution?' or 'If you had to decide which one we would build, which would it be?'

Jolene Tan-Davidovic, Senior User Researcher @ N26

“Participants tend to be polite and usually refrain from criticizing the design directly even if explicitly encouraged to be ‘brutally honest’. Because of this, it is better to use indirect methods to ask about their reasons for preferring one over the other.

For example, you could ask them how they would describe or recommend the feature to a friend and then you can take note of the positive and negative things that they mentioned. Or you could ask them where they think other people would have problems with this design,” adds Tan-Davidovic.

Step 3: Gather participants

As is the user testing rule of thumb, you need to find test participants that “reflect your target customers as closely as possible as well as the frame of mind or context needed to understand the design,” notes Tan-Davidovic.

“Sometimes this means that you will recruit existing customers (if it is important that participants understand the usage context), or sometimes this means you want non-customers who nevertheless reflect your target market (because you want ‘fresh eyes’).”

Hence, plan your recruitment: decide whether you’ll pay or otherwise incentivize participants and where you’ll source target users from.

Wondering what sample size you need for a preference test? Chibundu recommends you aim for 20-30 participants or a larger sample, so a clear preference in your results is more likely to be statistically significant.
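To get a feel for what statistical significance looks like at these sample sizes, here is a minimal Python sketch. It is an illustration, not part of Chibundu's advice, and it rests on several assumptions: there are exactly two design variants, every participant picks exactly one, and significance is judged with an exact two-sided binomial test against a 50/50 split at a 0.05 threshold. The function name `min_winning_votes` is hypothetical.

```python
from math import comb


def min_winning_votes(n_participants, alpha=0.05):
    """Smallest number of votes the leading design needs out of n_participants
    for the split to differ significantly from 50/50.

    Assumes two variants and an exact two-sided binomial test.
    Returns None if no split can reach significance at this sample size.
    """
    total_outcomes = 2 ** n_participants
    for k in range((n_participants + 1) // 2, n_participants + 1):
        # Probability of k or more votes for one design if users had no real preference
        upper_tail = sum(comb(n_participants, i)
                         for i in range(k, n_participants + 1)) / total_outcomes
        p_value = min(1.0, 2 * upper_tail)  # two-sided: either design could lead
        if p_value < alpha:
            return k
    return None


for n in (20, 30):
    print(n, "participants -> leading design needs", min_winning_votes(n), "votes")
```

Under these assumptions, the leading design needs 15 of 20 votes, or 21 of 30, before the result can be distinguished from chance. A narrower split may still point to a real preference, but you would need more participants to confirm it.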

Step 4: Conduct your preference test

Now that your test participants, design variants, and research questions are ready, go ahead and explain the process to the participants before you start the test.

If there’s something specific you want their feedback on, tell them about it.

Tip: Ensure that you alternate which design is shown first. This reduces bias as it takes care of the recency effect where the last shown design is more likely to be favored.

Jolene Tan-Davidovic, Senior User Researcher @ N26
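One way to alternate which design is shown first is to plan the presentation order before the sessions start. The sketch below is a hypothetical helper (the name `assign_presentation_orders` and the participant IDs are made up for illustration) that cycles through every possible ordering of the variants, so each design appears first about equally often.

```python
from itertools import permutations


def assign_presentation_orders(participant_ids, variants):
    """Give each participant an ordering of the variants, cycling through
    all possible orderings so that each one is used about equally often."""
    orders = list(permutations(variants))
    return {
        pid: list(orders[i % len(orders)])
        for i, pid in enumerate(participant_ids)
    }


# Hypothetical participants and two design variants
schedule = assign_presentation_orders(["p1", "p2", "p3", "p4"], ["A", "B"])
for pid, order in schedule.items():
    print(pid, "sees", " then ".join(order))
```

With two variants, participants simply alternate between seeing A first and B first. With three variants there are six orderings, so a participant count that is a multiple of six keeps the orders evenly balanced.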

Step 5: Study your preference test results

This is an essential step so you can take the insights you’ve learned from the preference tests and improve your design accordingly.

For qualitative data, this would mean you group similar responses and find patterns in those. As for quantitative data, analyze the questionnaire responses to find out the most preferred answer.

If there's a significant difference in the results, it will be easy to determine which design is a winner. If not, you can repeat the process by doing a second test on a new iteration of the design.
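For a quantitative test with two variants, one way to check whether a difference is significant is an exact two-sided binomial test against an even split. The sketch below is an assumption about how you might run that check, not a method prescribed in this guide. The function name `preference_p_value` and the sample responses are hypothetical, and the usual 0.05 cutoff is a convention rather than a rule.

```python
from collections import Counter
from math import comb


def preference_p_value(responses):
    """Tally which design each participant chose and return (counts, p_value).

    The p-value is the chance of a split at least this lopsided if users
    had no real preference between the two designs.
    """
    if not responses:
        raise ValueError("No responses to analyze")
    counts = Counter(responses)
    if len(counts) > 2:
        raise ValueError("This sketch only handles two design variants")
    n = len(responses)
    leader_votes = max(counts.values())
    # Probability of the leading design getting this many votes or more by chance
    upper_tail = sum(comb(n, i) for i in range(leader_votes, n + 1)) / 2 ** n
    return counts, min(1.0, 2 * upper_tail)


# Hypothetical survey result: 21 participants chose design A, 9 chose design B
responses = ["A"] * 21 + ["B"] * 9
counts, p_value = preference_p_value(responses)
print(dict(counts), "p-value:", round(p_value, 3))
```

A p-value below 0.05 suggests the preference is unlikely to be a fluke. A higher value is a hint to gather more responses or to test a new iteration, as described above.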

Preference test examples

There are many ways to use a preference test, and lots of situations where the insight of ‘which design do users prefer?’ can be incredibly useful.

In case you’re unsure when best to use a preference test, here are some examples:

- Trying to determine which image on a home page your users would prefer

- Wanting to know what color palette users prefer

- Looking to select a new icon for your next product iteration

- Deciding between types of illustration style for your app’s graphics

**Want to get started on preference testing right away? 🤔**

Check out our preference test templates for web design and marketing assets

Limitations of preference testing

Before we wrap this up, let’s also count the shortcomings of preference testing. Essentially, these limitations surface from a lack of user interaction with your design, leading to the following concerns:

- Since test participants aren’t interacting with your design, you’re asking them to predict the future by envisioning a design that doesn’t exist yet. And research suggests that humans aren’t good at predicting their own actions, be it predicting their relationship status or deciding whether a design variant will turn out to be user-friendly.

- People aren’t good at explaining the ‘why’ behind their choice either. This could be because they don’t exactly know why they picked a design, they don’t want to tell you, or they simply aren’t interested enough.

- Lastly, “the judgment is based solely on preference other than usage,” notes Chibundu. “In this case, the feedback will be influenced by personal factors that do not necessarily matter in a live scenario where the user is faced with a task.”

Tying it all together

Here’s hoping you are now confident about what a preference test is and how to conduct one.

Not sure if it’s right for you?

Keep the following in mind: despite their limitations, preference tests have their place in the early design process as they can help you design based on user preference instead of personal guesswork. Preference tests are also easy to conduct and less costly than A/B tests.

Therefore, as Tan-Davidovic summarizes, “conducting qualitative or survey preference tests before an A/B test is launched helps us be more confident that the final design actually solves the user problem and, hence, is more likely to perform better in the A/B test.”

Looking for more reliable data? It’s best you go ahead with usability testing. See some examples too.

Frequently asked questions about preference testing

What is preference testing?

Preference testing is a research method which involves participants choosing between 2-3 design variations and explaining their preference. Preference testing is used to understand users’ brand perception and visual preferences.

What is the goal of preference testing?

The goal of preference testing is to understand how users perceive different potential designs and what they feel about them.